AI Under the Hood: How to Build a Hallucination-Free Bank

The new direction of digital banking is a proactive, context-aware user experience (UX) – Sentient Banking – yet relatively little is said about the greatest risk of the technology required for it: hallucinations. Generative models are prone to hallucination, i.e., they generate confident but false, fabricated, or illogical information not justified by their training data, which is unacceptable for a financial institution. For AI to be integrated safely, the model's probability-based, creative thinking must be strictly isolated from the rule-bound operation of core banking systems. The large language model's (LLM) sole task should be understanding the customer's intent; compiling screens and executing transactions must remain the purview of a pre-audited, closed system.

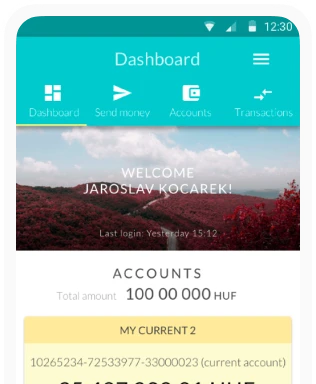

Digital banking is expected to undergo a transformation, the likes of which we haven't seen since the advent of smartphones. Slowly but surely, we are leaving behind the traditional self-service paradigm, where users are expected to navigate complex, static menu systems to perform a simple operation. The interfaces of the future will no longer wait for the user to figure out which submenu is hiding the card limit modification. Instead, we are entering the era of Sentient Banking, where the interface adapts to us rather than the other way around.

Here, the application doesn’t merely serve passively; it proactively understands our intentions as well. The user interface (UI) transitions from a classic, direct instruction-based system to the logic of intent and dialogue.

But there is a catch. Generative AI systems are prone to hallucination. While a flawed paragraph in a generated text or a sixth finger in a generated image is just a funny mistake, displaying a fictitious account balance or a non-existent feature on a banking interface means disaster and a loss of credibility. In the banking sector, reliability, strict auditability, and flawless regulatory compliance are fundamental requirements.

The End of Vibe Coding: Enter Context Engineering

The implementation of a context-aware interface begins where traditional frontend development ends: with the radical, comprehensive transformation of enterprise design systems. Until now, these systems have been built exclusively for designers and developers, providing visual guidelines on things like colors, button border radii, and typography. However, when AI enters the process, this traditional documentation will not be enough. If we just tell an LLM to create a transfer interface, the result might be aesthetically pleasing, but it will have nothing in common with strict banking regulations or the carefully crafted brand identity.

In industry jargon, this early, technologically experimental phase is called vibe coding: developers try to generate code using open, unstructured prompts based purely on aesthetic vibes and associations. Although vibe coding is spectacular, fast, and fun for prototyping, it is outright risky in a live enterprise environment, because it prioritizes creative expression and randomness over precise, reliable execution.

The solution is the implementation of a Context-Based Design System (CBDS). As TJ Pitre, founder of Southleft and the creator of the CBDS concept, put it: AI's favorite food is context. Thus, instead of vibe coding, we must transition to the world of context engineering, where AI works from highly precise, machine-readable metadata. Here, design tokens no longer store merely visual values (e.g., "color-red-600"), but are directly tied to semantic intent (e.g., "intent-fraud-alert"). The AI understands the situation perfectly, but we have wisely taken the actual drawing tool and the right to push pixels out of its hands.
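
To make the idea of semantic tokens concrete, here is a minimal sketch in Python. The token table, the second intent name, and the `resolve_token` helper are illustrative assumptions, not part of any published CBDS specification; only "intent-fraud-alert" and "color-red-600" come from the text above.

```python
# Hypothetical semantic token table: the AI only ever names an intent,
# and the design system decides what that intent looks like.
SEMANTIC_TOKENS = {
    "intent-fraud-alert": {"color": "color-red-600", "font_weight": "bold"},
    "intent-neutral-info": {"color": "color-grey-700", "font_weight": "regular"},
}


def resolve_token(intent: str) -> dict:
    """Return the visual values for a semantic intent, or raise if it is unknown."""
    if intent not in SEMANTIC_TOKENS:
        raise KeyError(f"unknown intent token: {intent}")
    return SEMANTIC_TOKENS[intent]


print(resolve_token("intent-fraud-alert")["color"])
```

The point of the design is that the model never chooses a color; it can only name an intent that the design system already knows.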

The Safety of the Adaptive Kit and the Walled Garden

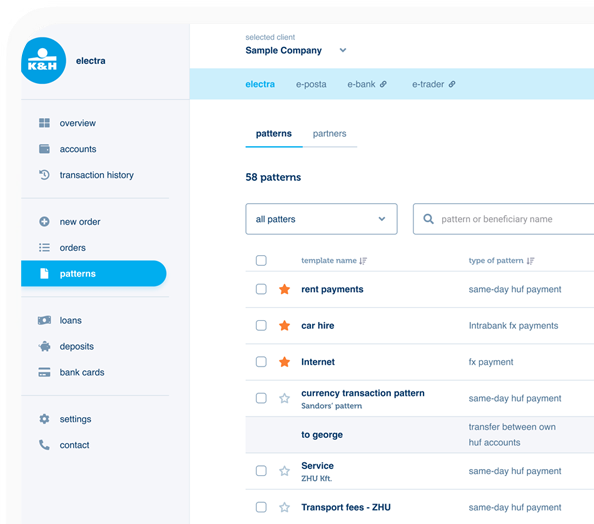

To physically display the customer intent decoded by the AI on the screen, an Adaptive Kit architecture must be introduced – a concept that software development borrowed from industrial design and modular architecture. In digital banking, this toolkit works exactly like a gigantic, but strictly controlled, enterprise-level Lego set. Every single building block – be it an interactive spending bar chart, a complex transfer form, or a biometric authentication window – has already been created pixel-perfectly by developers.

The most important difference compared to traditional development is that these modules have already been pre-audited individually by the legal, security, and compliance departments. When the LLM interacts with the user and responds to a request, it is strictly forbidden to generate raw code, HTML, CSS, or executable JavaScript. The LLM's sole job is to select and parameterize context-appropriate elements from this pre-validated component library with the correct data.

Since physical coding is not available to it, the AI operates in maximum safety within a walled garden containing pre-approved experiences. With this method, the AI never oversteps its bounds. Thus, even if the machine wanted to invent a non-existent, risky crypto-purchasing feature or an unauthorized loan request button, there is no Lego block in the set for it to build it on the user's screen.
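
The walled garden can be pictured as a closed registry. The following is a minimal sketch under stated assumptions: the component names, their allowed parameters, and the `select_component` function are hypothetical and only illustrate the "select and parameterize, never generate" rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComponentSpec:
    """One pre-audited building block and the parameters it accepts."""
    name: str
    allowed_params: frozenset


# Hypothetical Adaptive Kit: only these blocks exist for the AI.
ADAPTIVE_KIT = {
    "spending_bar_chart": ComponentSpec("spending_bar_chart", frozenset({"category", "period"})),
    "transfer_form": ComponentSpec("transfer_form", frozenset({"currency"})),
    "biometric_auth": ComponentSpec("biometric_auth", frozenset()),
}


def select_component(name: str, params: dict) -> dict:
    """Accept a selection only if the block and all its parameters are in the kit."""
    spec = ADAPTIVE_KIT.get(name)
    if spec is None:
        raise ValueError(f"component not in kit: {name}")
    unknown = set(params) - spec.allowed_params
    if unknown:
        raise ValueError(f"parameters not allowed for {name}: {sorted(unknown)}")
    return {"component": name, "params": dict(params)}
```

A request for a block such as "crypto_purchase" fails at the lookup, because no such entry exists in the registry.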

The JSON Bridge and Server-Driven UI (SDUI)

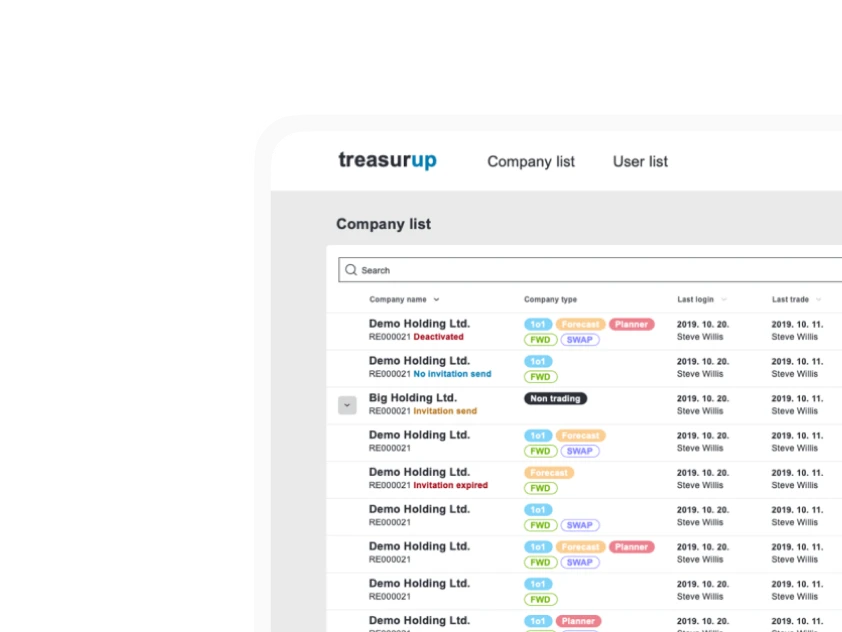

Suppose we have an intelligent, intent-understanding LLM and a kit full of audited UI elements. How does this all come together in real time into a smooth, functioning screen? For the application to dynamically adapt to the context within seconds, banks must abandon traditional client-side routing, where the rigid sequence of pages and submenus is hardcoded and burned into the application.

Instead, it is worth using a modern architecture called SDUI, which is connected to the AI by an impenetrable data barrier known as the JSON Bridge. The essence of this technology is that only the backend (the server) dictates the entire structure, layout, and content of the interface, while the mobile app running on our phone merely executes the instructions as an intelligent canvas. Meta, Airbnb, and Spotify have been using this method for years for quick updates; in the banking sector, however, it is the key not only to development speed but also to security.

The process under the hood consists of four steps. First, the LLM analyzes the user's intent. Second, it selects the matching components from the Adaptive Kit. Third, the backend generates a JSON payload bound to a strict schema (a lightweight, text-based data packet) that dictates exactly which chart should be populated with what numbers. Finally, the mobile app receives this packet, pulls its own native elements from memory, and instantly builds the screen.
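
The four steps can be sketched end to end as follows. Everything here is a simplified assumption: `llm_select_component` is a keyword stub standing in for a real LLM call, and the payload layout and widget names are invented for illustration.

```python
import json


def llm_select_component(utterance: str) -> str:
    """Steps 1-2 (stub): a real system would call an LLM to read the intent."""
    return "spending_bar_chart" if "spend" in utterance.lower() else "recent_transactions"


def build_payload(component: str, values: list) -> str:
    """Step 3: the backend, not the LLM, binds real numbers into the JSON packet."""
    return json.dumps({"screen": [{"component": component, "data": values}]})


# Step 4: the client maps component names to widgets it already ships with.
NATIVE_WIDGETS = {
    "spending_bar_chart": lambda data: f"BarChart{data}",
    "recent_transactions": lambda data: f"TransactionList{data}",
}


def render(payload: str) -> list:
    """Build the screen from native widgets; unknown names raise KeyError."""
    screen = json.loads(payload)["screen"]
    return [NATIVE_WIDGETS[item["component"]](item["data"]) for item in screen]


component = llm_select_component("How much did I spend last week?")
print(render(build_payload(component, [12.5, 30.0])))
```

Note that the numbers enter the packet in `build_payload`, after the model has already finished its part.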

Data Privacy and Schema Engineering: What Does the AI Actually See?

Security is not only about preventing interface crashes; it is also about protecting the most sensitive financial data. Many rightly ask: If an LLM analyzes my spending, does the AI know everything about me? In Sentient Banking systems, data protection takes place even before the JSON Bridge, in what is called a data masking layer. The LLM running in the cloud never sees the full reality, only the anonymized fragments necessary to perform the task.

When you ask, "How much did I spend at my favorite Italian restaurant last week?", the internal banking system scrubs the query of personally identifiable information (PII) before it even reaches the LLM. The AI doesn’t know your name, your exact balance, or your card number. It merely receives a tokenized request, to which it reacts by selecting the "restaurant spending" component. The actual, sensitive data is injected into the JSON data packet at the very last moment on the secure backend server, long after the AI has done its job.
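
A minimal sketch of such a masking layer, assuming a simple regex for 16-digit card numbers and a customer name taken from the bank's own profile data. Real PII detection is considerably more involved; the placeholders `<CARD>` and `<NAME>` and both function names are hypothetical.

```python
import re

# Assumption: card numbers appear as four groups of four digits.
CARD_RE = re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b")


def mask_query(query: str, customer_name: str) -> str:
    """Replace card numbers and the known customer name before the LLM sees the text."""
    masked = CARD_RE.sub("<CARD>", query)
    return re.sub(re.escape(customer_name), "<NAME>", masked, flags=re.IGNORECASE)


def inject_sensitive_data(selection: dict, amount: float) -> dict:
    """Runs on the secure backend, after the LLM has already returned its selection."""
    return {**selection, "data": {"amount": amount}}


masked = mask_query("I am Anna Kovacs, card 4111 1111 1111 1111, how much did I spend?", "Anna Kovacs")
print(masked)
```

The selection returned by the model contains only a component name; the amount is attached afterwards by `inject_sensitive_data`.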

If the AI were to go crazy due to some anomaly and try to force a hallucinated, irregular component into the response, Schema Engineering immediately enters the picture. Advanced validation tools, such as BAML (Boundary ML), are capable of verifying the output. If the format or structure of the data packet returned by the AI deviates in the slightest from the predefined banking schema, the system drops the request instantly, right at the server level. There is no bad code, no error message, no chaos on the user's screen.
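
The drop-on-deviation rule can be illustrated with a hand-rolled check. This sketch deliberately does not use BAML or any other validation library; it relies only on the standard library, and the allowed component names and the two-key schema are assumptions made for the example.

```python
import json

# Hypothetical schema: exactly these keys, and only these components.
ALLOWED_COMPONENTS = {"restaurant_spending", "card_block", "recent_transactions"}
REQUIRED_KEYS = {"component", "params"}


def validate_llm_output(raw: str):
    """Return the parsed selection if it matches the schema exactly, else None."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError:
        return None  # not even valid JSON: drop
    if not isinstance(obj, dict) or set(obj) != REQUIRED_KEYS:
        return None  # missing or extra keys: drop
    if not isinstance(obj["component"], str) or obj["component"] not in ALLOWED_COMPONENTS:
        return None  # hallucinated component: drop
    if not isinstance(obj["params"], dict):
        return None
    return obj
```

A `None` result means the request is discarded on the server and nothing derived from the model's output reaches the client.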

Organic State Management: The Cascade of Intent

An intelligent, context-driven banking interface cannot behave like a static, dumb website. The interface must appear as a collaborative digital partner that adapts to new, unexpected information in moments. In traditional banking apps, navigation is rigid. If I click a button, a new page downloads that is completely independent of the previous one. In the Sentient Banking model, however, contextual awareness instantly and organically runs through the entire application. We call this the Cascade of Intent.

Imagine the following scene. Someone is sitting in a café in Paris. Their banking app is in its relaxed, "exploratory" state. On the screen, there’s their holiday spending, a premium credit card offer, and their recent transactions. They suddenly notice trouble and tell the app: "I think my bank card was just stolen on the Métro." In a fraction of a second, the AI analyzes the situation and reclassifies the application's intent state to the highest, "critical security" level.

As the server sends over the new JSON instruction, the emergency protocol instantly cascades through the system. The system recognizes that credit offers and spending statistics are distractions in such cases, so these modules immediately disappear from the screen, and the card-blocking module rises to the very center. The underlying visual rules (design tokens) also switch: soothing pastel colors are replaced by high-contrast, warning shades, and the fonts become thicker. This visual focusing ensures that even an agitated user with trembling hands instantly finds the solution. The transition is not like a website refresh, but an organic shapeshifting.
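
The Paris scene can be sketched as a lookup from intent state to visible modules and active design tokens. The state names follow the text; the module names, token values, and the `screen_for` helper are assumptions for illustration.

```python
# Hypothetical mapping: each intent state fixes both the modules and the tokens.
INTENT_STATES = {
    "exploratory": {
        "modules": ["holiday_spending", "credit_card_offer", "recent_transactions"],
        "tokens": {"palette": "pastel", "font_weight": "regular"},
    },
    "critical_security": {
        "modules": ["card_block"],
        "tokens": {"palette": "high_contrast_warning", "font_weight": "bold"},
    },
}


def screen_for(state: str) -> dict:
    """Build the screen description that the server would send for a given state."""
    config = INTENT_STATES[state]
    return {
        "state": state,
        "modules": list(config["modules"]),
        "tokens": dict(config["tokens"]),
    }


before = screen_for("exploratory")
after = screen_for("critical_security")
print(before["modules"], "->", after["modules"])
```

Because modules and tokens are switched by the same state change, the offers disappear and the warning styling appears in one step rather than as separate updates.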

Conquering Latency and Neuro-Haptic UX

This multi-layered back-and-forth communication, however, exacts a heavy technological toll, known in IT as latency. LLMs are computationally heavy, cumbersome systems. While a mobile app reacts to a traditional button press in 50 milliseconds, in an AI-driven system, understanding the intent, generating the JSON payload, and communicating over the network can take up to several seconds. According to UX research, any wait exceeding 400–800 milliseconds ruthlessly destroys the illusion of real-time interaction.

For the machine to appear as a fluid, lag-free partner, engineers must deploy server-side techniques that significantly reduce GPU memory waste. Speculative decoding also helps: a smaller, less capable, but lightning-fast language model drafts the large model's response, which the large model then only has to verify. Semantic caching goes a step further: it recognizes the thousands of routine daily queries from users and instantly sends the finished screen to the phone, bypassing the LLM completely.
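
Semantic caching can be sketched as follows. Production systems typically compare embedding vectors; this example substitutes a toy word-overlap (Jaccard) similarity so that it stays self-contained. The class name, the threshold of 0.6, and the screen identifiers are assumptions.

```python
import re


def _tokens(text: str) -> set:
    """Lowercased word set; a stand-in for a real embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard overlap between the word sets of two queries."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


class SemanticCache:
    def __init__(self, threshold: float = 0.6):
        self.threshold = threshold
        self._entries = []  # list of (query, finished_screen) pairs

    def put(self, query: str, screen: str) -> None:
        self._entries.append((query, screen))

    def get(self, query: str):
        """Return a cached screen for a sufficiently similar query, else None."""
        best = max(self._entries, key=lambda e: similarity(query, e[0]), default=None)
        if best is not None and similarity(query, best[0]) >= self.threshold:
            return best[1]
        return None


cache = SemanticCache()
cache.put("what is my account balance", "balance_screen")
print(cache.get("What is my account balance?"))
```

On a cache hit the LLM is never called; on a miss the query proceeds through the normal pipeline and its result can be stored with `put`.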

The most spectacular weapon, however, is the streaming of structured data. The backend doesn’t wait until the entire JSON data packet is ready, but sends it to the phone continuously, drip-feeding it. This way, the top part of the screen is already being drawn while the bottom part of the data is still traveling from the server. All of this can be complemented with haptic feedback. While the machine works, the phone provides haptic signals (e.g., vibrations, motions, or pressure), which have been proven on a neurological level to reduce the user's subjective waiting time.
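
One simple way to picture structured streaming is newline-delimited JSON, where each line is a complete component that the client can draw immediately. This framing is an assumption chosen for clarity; real systems may instead stream and incrementally parse a single partial JSON document. Both function names are hypothetical.

```python
import json
from typing import Iterable, Iterator


def stream_screen(components: Iterable[dict]) -> Iterator[str]:
    """Backend side: emit each component as its own line as soon as it is ready."""
    for component in components:
        yield json.dumps(component) + "\n"


def render_incrementally(lines: Iterable[str]) -> list:
    """Client side: draw each component on arrival instead of waiting for the whole screen."""
    drawn = []
    for line in lines:
        component = json.loads(line)
        drawn.append(component["component"])  # a real client would draw a widget here
    return drawn


screen = [{"component": "header"}, {"component": "spending_bar_chart"}]
print(render_incrementally(stream_screen(screen)))
```

Because `stream_screen` is a generator, the header line is available to the client before the chart entry has been serialized.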

The Escalator Principle: Safety Even During a Power Outage

Despite multi-million-dollar infrastructure, validation layers, and optimizations, a banking system must always prepare for the worst. Cloud providers can go down, API connections can slow down, and security filters can stall the process. In a financial app, a "Service currently unavailable" message is not merely an annoying UX error, but a serious problem that can even draw severe regulatory penalties.

This is where one of the most important analogies of UX design comes into play: the escalator principle. If an escalator breaks down and stops, it doesn't trap people, or transform into an unclimbable wall; instead, it simply downgrades into a traditional, albeit more tiring, but perfectly usable set of stairs. It loses its convenience feature, but fully retains its primary usability, namely getting us upstairs or downstairs.

In the Sentient Banking architecture, this graceful degradation is coded into the system right from the very first lines of code. Since the AI's under-the-hood brain is physically severed from the user's phone, a complete collapse of the intelligent layer doesn't crash the mobile app itself. If the backend detects that the LLM is throwing an error, or if the response time crosses a critical threshold, the system cuts off communication with the AI and redirects the query to a traditional endpoint. Although personalized predictions disappear and the interface becomes more static, checking an account balance or freezing a card will continue to work flawlessly.
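
A minimal sketch of this fallback, assuming the LLM call is wrapped in a worker thread with a deadline. The 0.8-second default, the `handle_query` and `static_screen` names, and the response layout are illustrative assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

# Shared worker pool, so a slow LLM call never blocks the request handler.
_EXECUTOR = ThreadPoolExecutor(max_workers=4)


def static_screen(query: str) -> dict:
    """Traditional endpoint: no personalization, but core functions still work."""
    return {"mode": "static", "component": "account_overview"}


def handle_query(query: str, llm_call, timeout_s: float = 0.8) -> dict:
    """Try the intelligent layer first; fall back on an error or a missed deadline."""
    future = _EXECUTOR.submit(llm_call, query)
    try:
        return {"mode": "ai", **future.result(timeout=timeout_s)}
    except FutureTimeout:
        future.cancel()  # best effort; a call that is already running cannot be interrupted
        return static_screen(query)
    except Exception:
        return static_screen(query)  # the LLM layer raised an error


print(handle_query("freeze my card", lambda q: {"component": "card_block"}))
```

The caller always receives a usable screen description; only the "mode" field reveals whether the intelligent layer was involved.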

Free Within Boundaries

Ultimately, the banking integration of AI is built on an exciting contradiction. The essence of generative models is free, probability-based creativity, while the financial sector's is predictability. These two worlds can only meet safely if we strip AI of the capability for independent action, and steer it onto a closed track built on strict rules.

With the implementation of CBDSs and SDUIs, the AI no longer needs to be a developer or a visual designer. Instead, we appoint it as a dispatcher, directing traffic through a secure data barrier, selecting exclusively from pre-verified, legally audited components for the user.

The bank of the future will not be smart because it unleashes an omniscient AI. Quite the opposite. The secret lies in combining discipline and flexibility. Building a proactive, human-centric service is only possible if, in the background, strict security rules and pre-fabricated, audited elements keep the system in check. Ultimately, Sentient Banking does not mean breaking the rules, but rather applying them much more intelligently.