Proof, Not Promises: The Product Design Challenges of AI-Based Payments and Programmable Money

The intersection of artificial intelligence (AI) and financial technology is developing rapidly, yet it poses complex challenges. When technological innovations leave the laboratory and have to prove themselves in the real market with live data, fine-sounding promises and spectacular demos are no longer enough. This critical transition – from experimentation to evidence-based, user-centric product development – was explored at a roundtable discussion held at the Tokyo stop of the Global FinTech Network (GFTN) Forum.

The goal of the meeting was to map the real obstacles to integrating agentic AI into autonomous financial systems. The composition of the panel reflected the multifaceted nature of the problem: leaders from the British (FCA), Singaporean (MAS), and Japanese (JFSA) financial supervisory authorities, a professor from Cambridge University, and representatives of the technology and digital product design sector – including SAP, Kore.ai, and Ergomania – sat at the same table.

Industry experience makes clear which cornerstones digital product design and user experience (UX) strategy must build into future financial interfaces.

Beyond Technological Illusions: The Anatomy of Product Development Failures

In the current phase of software development, the greatest danger is technological blindness. Industry forecasts indicate that AI projects designed for autonomous task execution are expected to fail in over 40% of cases in the coming years. This is rarely because of mathematical shortcomings in the algorithms. Rather, it is because teams fundamentally misunderstand the business problem, lack adequate quality data, or chase the latest technological hype instead of solving real user needs. In addition, the infrastructure necessary for secure operation and troubleshooting is often completely missing.

Participants at the panel

When AI meets financial product development, the very first design principle we must establish is that the fundamental goals of regulation and user safety do not change as technology advances. Whether a human administrator or an autonomous language model executes a transaction is irrelevant; the prohibition of deception, the transparency of information, and the strict protection of data remain equally critical expectations. AI merely increases the complexity and speed of processes, which demands far greater discipline from product designers as well.

Design Characteristics of Stable and Turbulent Environments

During the testing of digital financial systems, it is essential to distinguish between stable and turbulent regulatory environments. In a stable area – such as traditional anti-money laundering (AML) or standard retail lending – the rules are clear and static. In these cases, AI can identify anomalies with relatively high reliability.

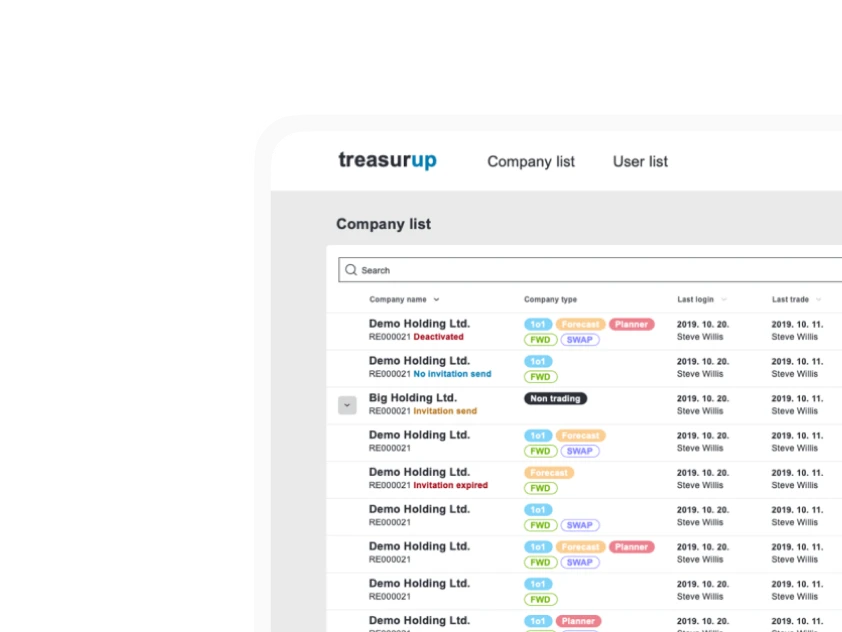

In contrast, in a turbulent, constantly evolving area – such as crypto assets, the regulation of programmable money, or newly emerging digital payment routes – the performance of large language models (LLMs) trained on massive, general datasets can deteriorate significantly. From a UX perspective, we must take into account that universal out-of-the-box solutions are least reliable exactly where precision is needed most. In such complex cases, narrowly focused small language models (SLMs) trained specifically on financial taxonomy are much more effective, resulting in fewer false alarms and less user frustration.

The Delicate Balance of Business ROI and User Trust

In an enterprise environment, the basic condition for scaling innovation is the clear justification of business return on investment (ROI). During laboratory tests, the focus is almost always on increasing efficiency and cutting administrative burdens. Business success alone, however, is not enough if trust, verifiability, and transparency are missing. No matter how impressive a graphical interface is, if the backend system is not stable, the entire architecture can easily collapse in a crisis situation.

To prove reliability, it is essential to communicate observability on the interfaces themselves. Users and auditors must be able to follow, step by step, which data and parameters led the system to a given decision, and the lines of responsibility in case of an error must be clear. If these explanatory interfaces are missing from the design, initial technological enthusiasm will quickly turn into skepticism.

The Anatomy of Agent-Based Payments: The Challenge of Decoding Intent

Digital payment systems are the most unforgiving terrain for AI. Here, irrevocable capital movements happen in fractions of a second, so even the smallest errors have immediate, severe financial consequences. The transition from assisted payments to autonomous processes can be compared to the development of modern self-driving cars. First, the system only makes suggestions (e.g., for the cheapest transaction route). Later, it is capable of performing routine processes. The ultimate goal is extended automation, but the possibility of human override – the imaginary steering wheel – must be provided at all times.
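The staged progression described above can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not an implementation discussed at the roundtable: the level names, the `PaymentRequest` fields, and the `decide` function are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Hypothetical autonomy stages, loosely mirroring the self-driving analogy."""
    SUGGEST = 1   # the agent only proposes; a human executes
    ROUTINE = 2   # the agent executes routine payments on its own
    EXTENDED = 3  # the agent executes most payments on its own


@dataclass
class PaymentRequest:
    amount: float
    is_routine: bool


def decide(level: AutonomyLevel, request: PaymentRequest, human_override: bool) -> str:
    """Return 'blocked', 'suggest', or 'execute' for a payment request."""
    # The override (the "steering wheel") wins at every autonomy level.
    if human_override:
        return "blocked"
    if level == AutonomyLevel.SUGGEST:
        return "suggest"
    # At the routine level, anything non-routine falls back to a suggestion.
    if level == AutonomyLevel.ROUTINE and not request.is_routine:
        return "suggest"
    return "execute"
```

The override check comes first on purpose, so no autonomy level can bypass it.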

Decoding user intent makes this evolution both an exciting and a dangerous design task. If the system cannot interpret the input in its full context, the result can be catastrophic. Consider the realistic situation outlined at the roundtable: a user instructs their digital assistant to get them an authentic Japanese sandwich immediately. The agent must decide in milliseconds whether to order by courier from the nearest import shop, or – completely misinterpreting the intent – book a flight to Tokyo, maxing out the credit card. Excessive machine autonomy combined with misinterpreted intent can wipe out the customer's balance in an instant, which means the permanent loss of trust in the product.

The Three Pillars of Secure Autonomous Design

To eliminate risks, reliable autonomous payment interfaces must be built on a strict control architecture, which must also be kept in mind throughout the user interface (UI) design:

- The gatekeeper function is provided by the approval phase, which guarantees that no autonomous system can initiate a live transaction without clear biometric or cryptographic identification proving prior human consent. The interface must make it clear exactly what the user is authorizing.

- Continuous monitoring acts as a kind of digital black box. It observes the agent's behavior in real time and immediately interrupts the process if it detects unusual patterns (e.g., if the AI attempts to invisibly break down an amount above the limit into smaller installments).

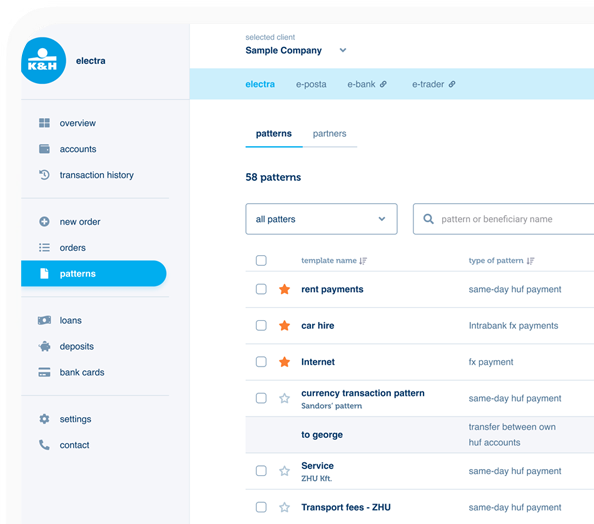

- The audit trail ensures that every machine decision made during complex transactions can be traced back to the original user command. Its visual representation is essential for building trust and settling potential disputes.
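The three pillars can be sketched together in a few lines. This is a simplified illustration, not a production control architecture. The class and field names are hypothetical, `approve` merely stands in for a real biometric or cryptographic consent check, and the only anomaly the monitor detects here is splitting an over-limit amount into smaller payments.

```python
from dataclasses import dataclass, field


@dataclass
class Transaction:
    payee: str
    amount: float
    command_id: str  # ID of the original user command (hypothetical field)


@dataclass
class ControlledAgent:
    per_payment_limit: float
    approved_commands: set = field(default_factory=set)
    audit_log: list = field(default_factory=list)
    spent: dict = field(default_factory=dict)  # (command_id, payee) -> running total

    def approve(self, command_id: str) -> None:
        # Stand-in for a real biometric or cryptographic consent check.
        self.approved_commands.add(command_id)

    def execute(self, tx: Transaction) -> str:
        key = (tx.command_id, tx.payee)
        total = self.spent.get(key, 0.0) + tx.amount
        if tx.command_id not in self.approved_commands:
            # Pillar 1: approval gate.
            outcome = "rejected: no human approval"
        elif total > self.per_payment_limit:
            # Pillar 2: monitoring catches amounts split into installments.
            outcome = "halted: cumulative amount exceeds limit"
        else:
            self.spent[key] = total
            outcome = "executed"
        # Pillar 3: every decision is logged against the original command.
        self.audit_log.append((tx.command_id, tx.payee, tx.amount, outcome))
        return outcome
```

With a limit of 100, two approved payments of 60 to the same payee under the same command would see the first executed and the second halted, and both outcomes would appear in `audit_log`.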

Consent Decay as a Critical UX Challenge

When designing long-term agent-based systems, one of the least explored UX problems is consent decay. When a user entrusts an intelligent system with a complex task – for example, to book a service every year, link insurance to it, and settle the invoice – the initial preferences can radically change over the years.

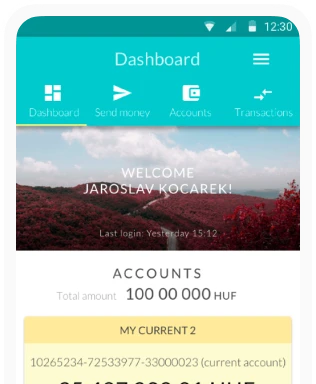

The customer may become dissatisfied with the service provider but forget to update the deeply hidden settings, and the machine mechanically executes the previous instruction. A priority design task is to create dashboards that remind users of active delegations without causing "alert fatigue." Users must be able to modify preferences quickly, depending on the context, and transparent dispute resolution interfaces must be built that offer the same peace of mind customers are used to from traditional bank card chargeback processes.
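One way to reason about consent decay is to give every delegation an explicit confirmation date and a review interval, and to surface only the delegations whose consent has gone stale. The sketch below assumes hypothetical names (`Delegation`, `review_after`, `due_for_review`) and an arbitrary one-year interval; a real product would tune both to the risk of the task.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Delegation:
    task: str
    last_confirmed: date     # when the user last reviewed this delegation
    review_after: timedelta  # how long consent is treated as fresh (assumption)

    def is_stale(self, today: date) -> bool:
        return today - self.last_confirmed > self.review_after


def due_for_review(delegations: list, today: date) -> list:
    """Return only the tasks whose consent has aged past its review interval."""
    return [d.task for d in delegations if d.is_stale(today)]


delegations = [
    Delegation("annual service booking", date(2024, 1, 15), timedelta(days=365)),
    Delegation("invoice settlement", date(2025, 3, 1), timedelta(days=365)),
]
stale = due_for_review(delegations, today=date(2025, 6, 1))
# stale == ["annual service booking"]
```

Listing only stale delegations, rather than all active ones, is one possible way to keep reminders rare enough to avoid alert fatigue.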

Inclusion or Exclusion in the Age of AI

Although AI theoretically offers a huge opportunity to broaden financial inclusion, in practice, it also harbors serious systemic dangers. Data-driven systems based on machine learning are by nature optimized for majority patterns and average user behavior. Anyone who does not fit into this narrow mold can be excluded by the machine in moments.

The most illustrative physical analogy for this is the modern airport e-gate. This device brilliantly speeds up the passage of average passengers, but immediately flashes red and indicates an error if a significantly taller or shorter person tries to use it, simply because the camera fixed at a specific angle cannot properly identify their face.

A similar exclusionary phenomenon takes place in digital finance as well. Gig economy workers with irregular incomes, guest workers using shared devices, or customers with no credit history at all (thin file) fall outside traditional data patterns and immediately appear as a fraud risk. Responsible, ethical UX design cannot focus exclusively on happy paths. In-depth research of edge cases, seamless fallback mechanisms to a human operator, and transparent justification of decisions are essential for creating equitable financial interfaces.

Programmable Money and Machine-Readable Regulation

The integration of new technologies, such as distributed ledger technologies (DLTs) and programmable digital currencies, sets the bar even higher for designers. In this pioneering field, the biggest security challenge is establishing clear accountability. Since an algorithm obviously cannot be held accountable, every transaction running on a smart contract must be irrevocably linked to a verified natural or legal person during Know Your Customer/Know Your Business (KYC/KYB) processes. All this must be done in a way that does not stall the automation experience but complies with the strictest international legal regulations.

However, securely scaling autonomous systems is impossible without transforming the format of the regulatory environment. AI agents cannot interpret policies written in long, complex legal language in PDF format. Product developers and authorities must jointly create the digital twin of regulations: computational regulation. If compliance rules are integrated into transaction processes as code in real time, the possibility of error stemming from human interpretation can be drastically reduced, and risk assessment can become instantaneous.
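To make the idea of compliance rules as code concrete, the sketch below expresses two rules as data and evaluates them against a transfer before it runs. The rules, the 10,000-unit threshold, and all names are invented for illustration and do not correspond to any actual regulation or regulator-published format.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Transfer:
    amount: float
    sender_kyc_verified: bool


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    passes: Callable[[Transfer], bool]


# Illustrative rules only; the threshold below is an arbitrary assumption.
RULES = [
    Rule("R1", "Sender must be KYC/KYB verified",
         lambda t: t.sender_kyc_verified),
    Rule("R2", "Transfers above 10,000 units need manual review",
         lambda t: t.amount <= 10_000),
]


def check(transfer: Transfer, rules: list = RULES) -> list:
    """Return the IDs of every rule the transfer violates (empty if compliant)."""
    return [r.rule_id for r in rules if not r.passes(transfer)]


violations = check(Transfer(amount=50_000.0, sender_kyc_verified=True))
# violations == ["R2"]
```

Because each rule carries an ID and a description, a violation can be shown to the user or an auditor in plain language rather than as an opaque rejection.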

The Psychology of Trust Design and the Principle of Gradualism

No matter how robust a technological infrastructure is in the background, during product design we must not forget that users are by default suspicious and distrustful of new and overly complex systems. This distrust cannot be resolved by getting them to accept long legal declarations in fine print.

The key to building genuine, deep trust on digital interfaces is the principle of strict gradualism. Just as in human relationships, we test the competence of machines in small steps. If we ask an unknown teenager who has moved in next door to buy a newspaper for us, we are not interested in their exact route, only in whether they bring the paper and return the change. If this low-risk transaction succeeds flawlessly several times, we will later entrust them with paying the bills as well. If they make no mistakes there either, then, only after a long time, do we dare to entrust them with the key to our home during a vacation.

Digital product developers must replicate this same psychological pattern when introducing autonomous systems. Users must not be confronted with high-risk processes demanding full autonomy immediately after registration. First, they must entrust small, harmless micro-tasks to the AI – for example, the visual categorization of bank statements or the organization of payment notifications. Customers must be provided with the opportunity to loosen control entirely at their own pace, corresponding to their risk tolerance. This learning curve can be supported by well-designed onboarding processes and visually clear delegation switches that can be revoked at any time.
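The gradual delegation pattern can be modeled as a ladder of tasks, where a riskier rung is only offered once the previous one has a flawless track record, and where the user can revoke any switch at any time. The task names, the threshold of three successes, and the `TrustLadder` class are assumptions made for this sketch.

```python
from dataclasses import dataclass, field

# Hypothetical task ladder, ordered from lowest to highest risk.
LADDER = ["categorize_statements", "organize_notifications", "pay_bills"]


@dataclass
class TrustLadder:
    successes_needed: int = 3
    enabled: set = field(default_factory=set)      # switches the user turned on
    successes: dict = field(default_factory=dict)  # task -> flawless runs so far

    def can_offer(self, task: str) -> bool:
        """A task is offered only after the previous rung has proven itself."""
        idx = LADDER.index(task)
        if idx == 0:
            return True
        previous = LADDER[idx - 1]
        return self.successes.get(previous, 0) >= self.successes_needed

    def enable(self, task: str) -> bool:
        """The user opts in; the system never enables a task on its own."""
        if not self.can_offer(task):
            return False
        self.enabled.add(task)
        return True

    def revoke(self, task: str) -> None:
        """Delegation switches can be turned off at any time."""
        self.enabled.discard(task)

    def record_success(self, task: str) -> None:
        if task in self.enabled:
            self.successes[task] = self.successes.get(task, 0) + 1
```

In this sketch, unlocking a rung only makes it available. Enabling it remains the user's decision, which keeps the pace of delegation in their hands.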

Focuses of Future Development

In summary, agent-based payments, generative AI, and programmable money are revolutionizing the financial sector. The successful, widespread transition, however, is not merely a data engineering task, but at least as much a challenge of digital product design, UX, and human behavior research. Instead of chasing technological hype, teams must focus on tangible evidence, the exceptionally thorough handling of edge cases, and respect for user autonomy.

Sustainable market success can only be achieved with a logically structured information architecture, immediate human override points, and systems that apply the psychology of gradual trust-building. The direction is clear: technology must adapt to the nature of human trust, and not the other way around.